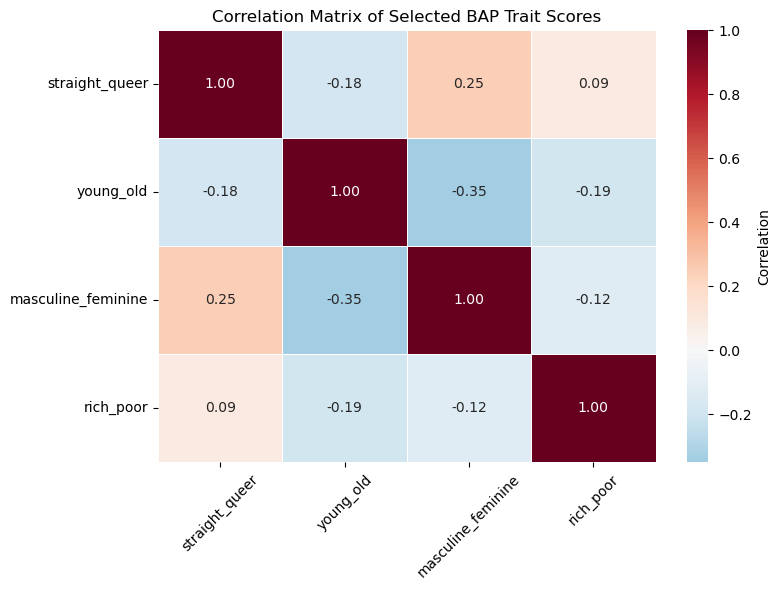

In Part 1, we started looking at some trends in the data. There are fun associations that fans would likely find amusing or obvious. But there are also associations that may suggest deeper cultural norms about how certain categories of people are depicted in fiction. In this section, we look at four dimensions that speak to important demographic categories: straight_queer, young_old, masculine_feminine, and rich_poor. It is important to note that, by categorizing characters based on respondents’ ratings on these dimensions, we are assessing how perceptions of these categories are related to perceptions of other dimensions.

Imports¶

# importing data libraries

import pandas as pd

import numpy as np

import matplotlib.pyplot as plt

import seaborn as sns

import os

import plotly.express as px

import ipywidgets as widgets

from IPython.display import display

import random

from sklearn.preprocessing import StandardScaler

import finaltools as ft

import pingouin as pgReading the Data¶

# read processed data from part 1 notebook

char_score_data = pd.read_csv("data/processed/char_score_data.csv")

char_score_data.head()Association Visualization¶

# selected bap features/dimensions of interest

target_dimensions = ["straight_queer", "young_old", "masculine_feminine", "rich_poor"]

corr = char_score_data[target_dimensions].corr().round(2) # round to 2 decimals

# set up figure

plt.figure(figsize=(8, 6))

# create heatmap with diverging red-blue colors and annotations

sns.heatmap(

corr,

annot=True, # show correlation values

fmt=".2f", # 2 decimal places

cmap="RdBu_r", # diverging red-blue

center=0, # center at 0 for +/- interpretation

cbar_kws={'label': 'Correlation'},

linewidths=0.5

)

# labels and title

plt.xticks(rotation=45)

plt.yticks(rotation=0)

plt.title("Correlation Matrix of Selected BAP Trait Scores")

plt.tight_layout()

# save plot to visualizations folder

plt.savefig("visualizations/selected_dim_correlation_map.png", dpi=300, bbox_inches="tight")

# show plot

plt.show()

We see that the features are not very strongly (pairwise) correlated with each other. This is good as we don’t need to reduce dimensionality.

Assessing Individual Dimensions¶

While these dimensions are not strongly (pairwise) correlated with each other, they may be correlated with other dimensions in the data set. To start, we can standardize the data (through an array) and then turn it back into a pandas dataframe.

data_values = char_score_data.iloc[:, 3:]

scaler = StandardScaler()

data_values = scaler.fit_transform(data_values)

char_scores_scaled_df = pd.DataFrame(data_values)

char_scores_scaled_df.columns = char_score_data.iloc[:, 3:467].columns.to_list()Next, we create a 500x500 correlation matrix. While this would be a mess to visualize, we can select our target dimensions and see if if they are highly correlated with any other dimensions.

target_corrs = ft.target_mode_of_correlations(char_scores_scaled_df, target_dimensions)

target_corrs_df = pd.DataFrame(target_corrs)

target_corrs_dftarget_corrs_df.to_csv("data/target_correlations.csv", index=False)By social sciences standards, there are some strong correlations here. For example, straight_queer is inversely correlated with androgynous_gendered, which suggests that the more queer a character is, the more likely their depiction is androgynous. However, these correlations are not controlling for the influence of other dimensions. We can use the pingouin library to calculate the partial correlations, which show us correlations between dimensions while controlling for other dimensions.

target_pcorrs = ft.target_mode_of_correlations(char_scores_scaled_df, target_dimensions, mode = "pcorr")

target_pcorrs_df = pd.DataFrame(target_pcorrs)

target_pcorrs_dftarget_pcorrs_df.to_csv("data/target_partial_corr.csv", index=False)These coefficients are far less suggestive of strong relationships. However, given how many redundant dimensions we have in the data, this might simply be an issue of too much noise and too unsophisticated of a method. We can revist these questions after doing some dimension reduction in Part 3.